Published: March 31, 2026

But Where Are You Going to Run All of Those Tests?

Something interesting is happening in QA teams right now. AI agents and MCP-powered tools are letting testers author automation faster than ever before. What used to take a week of scripting can be roughed out in an afternoon. Test coverage that once felt aspirational is suddenly within reach. It’s genuinely exciting. And it’s quietly creating a problem nobody is talking about.

When test authoring gets faster, the conversation almost always stays on the authoring: which tool to use, how to structure prompts, how to get reliable locators. What nobody stops to ask is the question that actually determines whether any of it ships.

Where, exactly, are you going to run all of those tests?

AI can generate hundreds of new tests in the time it used to take to write ten. That’s real leverage. But leverage amplifies whatever is underneath it, including a setup that was never designed to handle the load. The teams discovering this right now aren’t failing at automation. They’re succeeding at it faster than their execution layer can keep up.

Building automation is a milestone. Executing it reliably, at scale, is a different problem entirely.

The Pattern Almost Every Team Follows

It usually starts simply enough. A few Android devices on someone’s desk. An iPhone shared between two engineers. Appium scripts running from a laptop before a release. It works. And for a while, it’s enough.

Then the scope grows. More device models, more OS versions, cross-browser coverage, multiple teams needing to run tests in parallel. And almost overnight, the constraint shifts. The question stops being “Can we automate this?” and becomes “Do we have the execution capacity to actually run this?” Most teams aren’t prepared for that moment, not because they didn’t see automation coming, but because the execution problem sneaks up quietly.

What “Scale” Actually Looks Like

At a certain point, the volume of tests stops being the hard part. It’s everything around them: the device fragmentation, the OS combinations, the browser version drift, teams across time zones all reaching for the same device pool at once. The tests keep growing. The foundation wasn’t built for that.

And that’s before the harder scenarios come into play: accessibility flows that behave differently on real hardware, carrier-dependent features that a Wi-Fi-only emulator simply won’t surface, and connected car integrations for Android Auto or CarPlay that can’t be approximated on a desktop. These aren’t edge cases. They’re real parts of real apps that most execution environments quietly fail at.

This is what execution debt looks like in practice: the QA team stops writing tests and starts managing OS updates, debugging capacity conflicts, and fielding complaints about flaky results that turn out to be setup problems. Automation that should be accelerating releases ends up generating its own overhead.

The Real Problem Isn’t Owning Devices — It’s Building the Layer on Top

Many teams already own their devices, and that’s fine. The devices aren’t the problem. The problem is everything that has to be built around them: the orchestration, the integrations, the access controls, keeping it all running reliably as OS versions change and hardware ages. That’s not a one-time setup. It’s ongoing work that someone has to own.

When teams try to build that management layer themselves, it quietly becomes a second engineering product with no roadmap, no dedicated owner, and a habit of breaking at the worst possible moment. That’s the tax nobody signed up to pay.

Owning devices is fine. Building your own device management platform from scratch is where the real cost hides.

What the Right Platform Actually Solves

When QA leaders start evaluating execution platforms, the conversation usually starts and ends with device availability and CI integration. Those matter, but they’re the baseline. The more important question is whether the platform was built for the full range of scenarios your app actually needs tested.

Start with deployment. Some teams want devices on demand without any procurement overhead, and a cloud option handles that. Others already have a device lab and need the right software layer to make it work at scale, and an on-premise option handles that. The choice shouldn’t force a trade-off between convenience and control. A platform worth evaluating should support both, with the same capabilities either way.

Groupe BPCE, the second-largest banking group in France, took exactly this path. They had inconsistent manual testing across multiple locations, no traceability, and a team that needed centralized execution without giving up their own devices. With an on-premise deployment, they now support 700 users across 102 devices and 32 browser versions, with tests running every 5 to 15 minutes around the clock. Read the full case study.

But deployment flexibility is just the starting point. The harder thing to evaluate — and the thing most teams don’t ask about until it’s too late — is depth of coverage.

Think about the scenarios mentioned earlier. Accessibility flows that depend on TalkBack or VoiceOver need to run on real devices, because that’s the only environment where screen reader behavior is accurate. Performance under real-world conditions like CPU pressure, memory load, and battery drain only surfaces on physical hardware. Audio-dependent features, carrier flows that assume a SIM is present, automotive integrations for Android Auto or CarPlay: these are real parts of real apps, and most platforms either don’t support them or treat them as niche add-ons.

So when evaluating a platform, go beyond “can it run our Appium tests” and ask whether it can handle the scenarios your team has been quietly deferring. Can it run those tests across geographies without you standing up regional labs? Does it fit into your CI pipeline so a merged PR moves through build, upload, execution, and reporting without anyone manually shepherding it?
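To make the "no manual shepherding" idea concrete, here is a minimal sketch of a pipeline that chains the four stages and stops at the first failure. Everything in it is an assumption for illustration: the stage functions are hypothetical placeholders, not any platform's real API, and a real pipeline would call your build tool and your execution platform where the placeholders return canned values.

```python
from typing import Callable, Dict, List, Tuple

# A stage is a (name, function) pair; each function takes and returns a context dict.
Stage = Tuple[str, Callable[[Dict], Dict]]


def run_pipeline(stages: List[Stage], context: Dict) -> Dict:
    """Run stages in order, feeding each stage's output to the next.

    Stops at the first stage that raises and reports which one failed.
    """
    completed: List[str] = []
    for name, step in stages:
        try:
            context = step(context)
        except Exception as exc:
            return {
                "ok": False,
                "failed_stage": name,
                "error": str(exc),
                "completed": completed,
            }
        completed.append(name)
    return {"ok": True, "completed": completed, "context": context}


# Hypothetical placeholder stages. Real ones would invoke the build tool
# and the execution platform instead of returning canned values.
def build(ctx: Dict) -> Dict:
    return {**ctx, "artifact": f"app-{ctx['commit']}.apk"}


def upload(ctx: Dict) -> Dict:
    return {**ctx, "upload_id": "upload-1"}


def execute(ctx: Dict) -> Dict:
    return {**ctx, "results": {"passed": 3, "failed": 0}}


def report(ctx: Dict) -> Dict:
    results = ctx["results"]
    return {**ctx, "summary": f"{results['passed']} passed, {results['failed']} failed"}


result = run_pipeline(
    [("build", build), ("upload", upload), ("execute", execute), ("report", report)],
    {"commit": "abc123"},
)
print(result["completed"], result["context"]["summary"])
```

The point of the shape, rather than the details, is that a merged PR supplies the initial context and no human touches anything between build and report. If any stage fails, the result names that stage, which is the information a team needs to tell a test failure from a setup failure.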

The teams that get this right don’t just remove operational overhead. They unlock a level of coverage they couldn’t justify before, not because the tests were too hard to write, but because there was nowhere reliable to run them.

The Question Worth Asking Now

Before your next automation planning session, before the next sprint review where someone celebrates hitting 80% coverage, ask the harder question.

“Where are we going to run all of these tests six months from now?”

Because the math is unforgiving:

100 tests can run almost anywhere. 5,000 tests need orchestration. 50,000 tests — covering functional, accessibility, performance, audio, carrier, and connected car scenarios — need architecture.
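A rough back-of-the-envelope estimate shows why. The sketch below assumes tests are spread evenly across devices and that each test takes the same time. The two-minute average and the ten-device pool are illustrative assumptions, not measurements from any real team.

```python
import math


def wall_clock_minutes(num_tests: int, avg_test_minutes: float, parallel_devices: int) -> float:
    """Estimate suite wall-clock time when tests are split evenly across devices."""
    if parallel_devices < 1:
        raise ValueError("need at least one device")
    # Each device works through its share of the suite one test at a time.
    tests_per_device = math.ceil(num_tests / parallel_devices)
    return tests_per_device * avg_test_minutes


# Assumed figures: 2 minutes per test, 10 devices available in parallel.
for n in (100, 5_000, 50_000):
    minutes = wall_clock_minutes(n, avg_test_minutes=2, parallel_devices=10)
    print(f"{n:>6} tests -> {minutes / 60:.1f} hours")
```

Under those assumptions, 100 tests finish in about 0.3 hours, 5,000 take roughly 16.7 hours, and 50,000 take about 166.7 hours, or close to a week for a single full run. The only levers are more parallel capacity, shorter tests, or running fewer of them, and the first of those is an execution-layer decision.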

The form it takes — cloud, on-premise, or hybrid — is up to you. But the teams shipping confidently at scale all have one thing in common: they asked the execution question early enough to answer it well.

You Might Also Like

But Where Are You Going to Run All of Those Tests?

Something interesting is happening in QA teams right now. AI…

Virtual vs Real Devices: What Actually Matters in Mobile Testing

If you’ve spent any time testing mobile apps, you already…

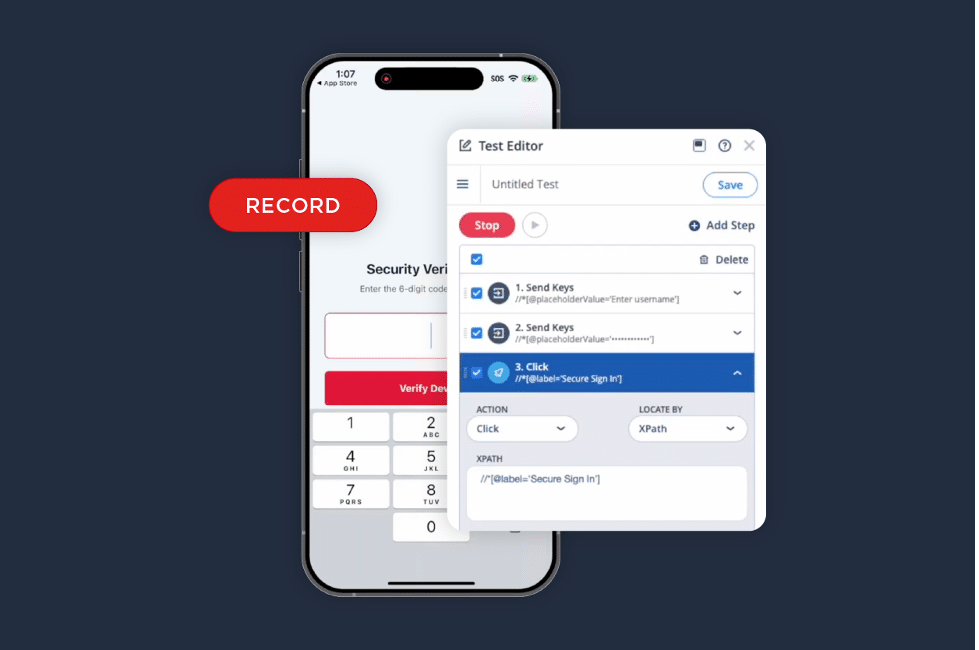

iOS Test Recorder: A Faster Way to Turn Validation into Automation

We listened to your feedback. The iOS Test Recorder is…