Published: March 25, 2026

Virtual vs Real Devices: What Actually Matters in Mobile Testing

If you’ve spent any time testing mobile apps, you already know the checklist never really ends:

- Does the app work?

- Is it fast enough?

- Does it behave consistently across devices, screen sizes, and OS versions?

- Does it meet accessibility standards?

- Is it secure?

- Does it feel right to the user?

And somewhere along the way, you run into the classic question:

Should I test on real devices, or are virtual devices good enough?

The honest answer? It depends. But not in a vague, unhelpful way. It isn’t either-or. It’s about understanding where each fits—and more importantly, where each falls short.

Virtual Devices: Fast, Convenient… and Slightly Misleading

When people talk about virtual devices, they usually mean simulators and emulators. The two terms are often used interchangeably, but there’s a subtle and important difference.

Simulators focus on replicating app behavior and UI using your machine’s hardware, which makes them extremely fast. Emulators go a step further by trying to mimic actual device hardware, which makes them more realistic—but also slower and heavier.

Here’s a simple way to look at it:

| Feature | Simulator | Emulator |
| --- | --- | --- |
| Hardware Simulation | ❌ No | ✅ Yes |
| Performance | ⚡ Fast | 🐢 Slower |
| Accuracy | Medium | High |
| CPU Architecture | Host machine | Emulated (ARM, etc.) |
| Use Case | UI, basic testing | System, integration, edge cases |

This is why iOS testing feels smoother (Xcode ships a simulator), while Android testing feels more “real” but resource-intensive (Android Studio ships an emulator).

Why Virtual Devices Are So Widely Used

There’s a reason almost every team relies heavily on virtual devices—they make testing fast and scalable. You can spin up devices instantly, run tests in parallel, and integrate them seamlessly into CI/CD pipelines. For early-stage development, debugging, and regression testing, they’re incredibly effective.
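
To make the parallelism point a little more concrete, here is a minimal sketch of splitting a test list across a pool of virtual devices in round-robin order. The device and test names are hypothetical, and a real pipeline would hand each shard to its own emulator or simulator instance through whatever runner the team already uses.

```python
from typing import Dict, List


def shard_tests(tests: List[str], devices: List[str]) -> Dict[str, List[str]]:
    """Assign tests to devices round-robin so each device gets a similar share."""
    if not devices:
        raise ValueError("at least one device is required")
    shards: Dict[str, List[str]] = {device: [] for device in devices}
    for index, test in enumerate(tests):
        # Test 0 goes to device 0, test 1 to device 1, then wrap around.
        shards[devices[index % len(devices)]].append(test)
    return shards


# Hypothetical names, for illustration only.
tests = ["test_login", "test_search", "test_cart", "test_checkout", "test_logout"]
devices = ["emulator-1", "emulator-2"]
shards = shard_tests(tests, devices)

for device, assigned in shards.items():
    print(device, assigned)
```

Round-robin is the simplest possible split. Teams with uneven test durations often balance shards by historical run time instead.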

More importantly, they help teams move quickly without needing to maintain a massive physical device lab. And for a big chunk of testing—especially UI validation and functional flows—they’re generally sufficient.

But Here’s the Catch: Real Users Don’t Use Virtual Devices

This is where things start getting interesting.

Modern users are extremely sensitive to performance. According to research, over 50% of mobile users abandon experiences that take longer than 3 seconds to load. On top of that, nearly half of users will uninstall an app if it performs poorly or feels slow.

Now think about this in the context of virtual devices.

They don’t accurately simulate:

- Battery drain

- Thermal throttling

- GPU rendering behavior

- Real memory pressure

Now layer accessibility on top of that.

Users who rely on assistive technologies—screen readers, voice navigation, larger fonts, or high-contrast modes—are even more sensitive to poor experiences. And this is where virtual devices start to show limitations.

They don’t fully replicate:

- Real screen reader behavior (like TalkBack or VoiceOver nuances)

- Gesture-based navigation patterns used by accessibility services

So, while your app might technically “pass” accessibility checks and look perfectly fine overall on a virtual device, it may still feel broken or frustrating for real users.

The Gaps Become Obvious in Real-World Scenarios

As soon as you move beyond basic functionality, virtual devices start showing cracks.

Take network behavior, for example. Mobile users don’t operate on stable, high-speed connections all the time. Fluctuating signals, carrier-specific quirks, and latency spikes are part of everyday usage. Virtual devices struggle to replicate this.

Then there are processors and sensors: CPUs, GPUs, GPS, biometrics, camera, and motion data. These are often mocked or approximated, which works for basic validation but doesn’t fully reflect real-world conditions.

Performance testing is another area where things can get misleading. You might get clean numbers on a virtual device, but those numbers don’t always translate to actual user experience. In reality, even a 1-second delay can reduce conversions by up to 7%, and users expect apps to respond within 1–2 seconds at most.

That’s a very tight margin for error—one that virtual environments don’t always help you measure accurately.
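
One way to check against that margin is to compare response-time samples to a budget using a high percentile rather than the average. The sketch below assumes a 2-second budget (the upper end of the range mentioned above) and uses made-up measurements. The function names are illustrative, not part of any testing tool.

```python
import math
from typing import List


def percentile(samples: List[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def within_budget(samples: List[float], budget_s: float = 2.0, pct: float = 95) -> bool:
    """True if the chosen percentile of response times fits inside the budget."""
    return percentile(samples, pct) <= budget_s


# Made-up response times in seconds, for illustration only.
virtual_run = [0.6, 0.7, 0.7, 0.8, 0.8, 0.9, 0.9, 1.0, 1.0, 1.1]
real_run = [0.9, 1.0, 1.1, 1.2, 1.3, 1.4, 1.6, 1.8, 2.3, 2.9]

print("virtual within budget:", within_budget(virtual_run))
print("real within budget:", within_budget(real_run))
```

In this invented data set the real-device run averages about 1.55 seconds, which looks fine, yet its slowest samples blow the budget. That is the kind of gap an average taken on a virtual device can hide.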

The Security Angle Most Teams Overlook

One area that often gets overlooked in this conversation is security.

Modern mobile apps frequently include protections like root or jailbreak detection, emulator detection, and anti-tampering mechanisms. These are designed to prevent reverse engineering and abuse, but they also introduce an interesting side effect—many secured apps won’t run properly on virtual devices at all.
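
To see why a protected app may refuse to start on a virtual device, it helps to look at what a very basic emulator check might inspect. The sketch below scores a dictionary of Android system properties against a few commonly cited heuristics. The property values are invented, the function is hypothetical, and commercial protection tools rely on many more signals than these.

```python
from typing import Dict, List


def emulator_signals(props: Dict[str, str]) -> List[str]:
    """Return the heuristics that suggest these system properties come from an emulator."""
    signals: List[str] = []
    if props.get("ro.kernel.qemu") == "1":
        signals.append("qemu kernel flag")
    if props.get("ro.hardware", "").lower() in {"goldfish", "ranchu"}:
        signals.append("emulator hardware name")
    if props.get("ro.build.fingerprint", "").lower().startswith("generic"):
        signals.append("generic build fingerprint")
    return signals


# Invented property sets, for illustration only.
emulator_props = {
    "ro.kernel.qemu": "1",
    "ro.hardware": "ranchu",
    "ro.build.fingerprint": "generic/example_sdk/example:0/EXAMPLE/0:userdebug/test-keys",
}
device_props = {
    "ro.hardware": "examplechip",
    "ro.build.fingerprint": "examplevendor/examplephone/example:0/EXAMPLE/0:user/release-keys",
}

print(emulator_signals(emulator_props))
print(emulator_signals(device_props))
```

An app that exits or degrades when any of these signals fire will behave differently on an emulator than it does in a user's hand, so a green run on the emulator says little about the protected build.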

This creates a subtle but serious problem. If your testing strategy relies heavily on virtual devices, you may end up validating an unprotected version of your app or skipping validation after security controls are applied. Either way, you’re not testing what your users will actually experience in production.

This becomes even more critical in environments where app protection, obfuscation, or runtime security controls are part of the release pipeline. The gap between “tested” and “shipped” can grow wider than most teams realize.

See how Digital.ai Testing can help you test hardened applications.

Why Real Devices Still Matter

At the end of the day, users interact with real devices in unpredictable conditions. That’s something you can’t fully simulate.

Real devices help uncover:

- Device-specific bugs

- Performance issues under real load

- Battery and memory-related problems

- Network-dependent failures

- Accessibility issues

- Security-related behavior

They give you confidence—not just that your app works, but that it works where it matters.

So, What’s the Right Approach?

The most effective teams don’t choose between virtual and real devices—they combine them thoughtfully.

A simple way to think about it:

- Use virtual devices when you need speed, scale, and fast feedback

- Use real devices when accuracy, performance, and user experience matter

Or more practically:

If your test outcome could change based on hardware, network, or security conditions—you should be running it on a real device.
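
That rule of thumb is easy to encode. The sketch below assumes each test carries a set of tags and routes anything tagged with hardware, network, or security to a real device. The tag names and the function are illustrative assumptions, not a feature of any particular framework.

```python
from typing import Iterable, Set

# Conditions from the rule above that call for a real device.
REAL_DEVICE_TAGS: Set[str] = {"hardware", "network", "security"}


def target_for(tags: Iterable[str]) -> str:
    """Return "real" if any tag depends on real-world conditions, else "virtual"."""
    return "real" if REAL_DEVICE_TAGS & set(tags) else "virtual"


# Hypothetical tests and tags, for illustration only.
suite = {
    "test_login_layout": ["ui"],
    "test_checkout_on_weak_signal": ["ui", "network"],
    "test_jailbreak_block": ["security"],
}

for name, tags in suite.items():
    print(name, "->", target_for(tags))
```

Keeping the routing decision in the test metadata means the fast virtual runs stay fast, and the real-device pool is spent only on tests whose outcome could actually differ there.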

Final Thoughts

Virtual devices are fast, cost-efficient, and essential for modern development workflows. Real devices are expensive and harder to manage, but they reflect reality far more accurately.

And in mobile testing, reality is what ultimately matters.

The goal isn’t to pick one—it’s to strike the right balance between speed and confidence. Teams that get this right don’t just ship faster—they ship better.

Here are some resources on performance testing, testing secured apps, and how a Shared Devices model (a hybrid of private and public devices) fits into the picture:

- https://digital.ai/catalyst-blog/performance-testing-for-mobile-beyond-just-is-it-fast/

- https://digital.ai/catalyst-blog/the-invisible-wall-why-secured-apps-break-test-automation/

- https://digital.ai/resource-center/guides/quick-start-guide-testing-hardened-mobile-apps/

- https://digital.ai/catalyst-blog/shared-not-exposed-how-testing-clouds-are-being-redefined/

You Might Also Like

Virtual vs Real Devices: What Actually Matters in Mobile Testing

If you’ve spent any time testing mobile apps, you already…

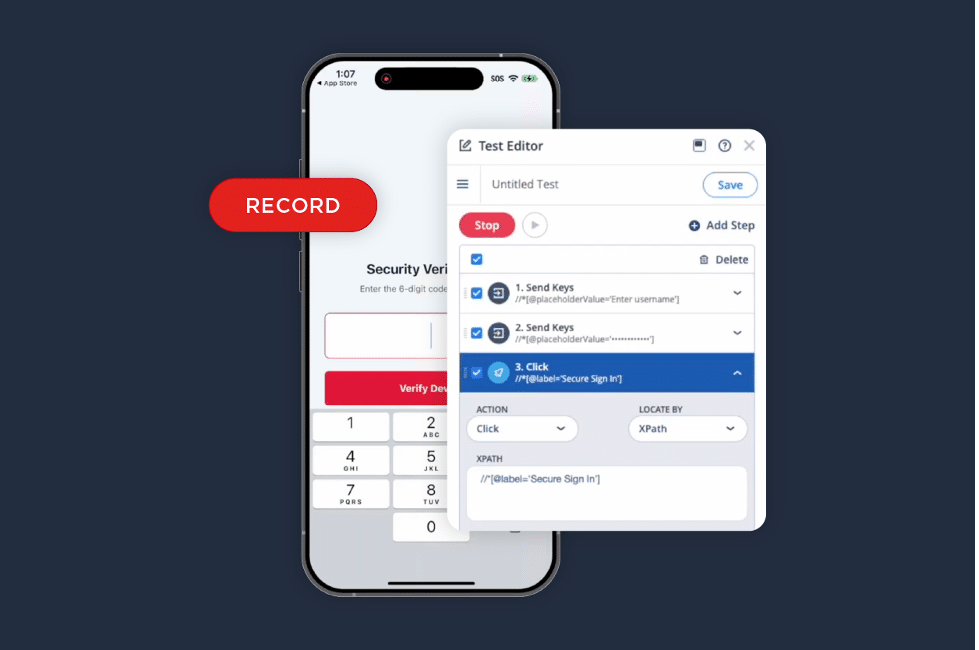

iOS Test Recorder: A Faster Way to Turn Validation into Automation

We listened to your feedback. The iOS Test Recorder is…

Start Testing Samsung Galaxy S26 Series Today with Digital.ai Testing

Samsung has officially introduced the Galaxy S26 series, continuing its lineup…