Published: March 17, 2026

Accelerating Test Creation using LLMs

Manual Testing Bottleneck

Over the past couple of decades, software testing has gone through a pretty significant evolution. Not too long ago, most testing was completely manual. A single release cycle could take anywhere from a week to a month—and in some cases even longer—just to validate an application before it went out the door. As you can imagine, this often became a major bottleneck in the delivery pipeline.

Then automation started gaining traction.

Rise of Automation

First came proprietary frameworks like SeeTest, and later the open-source ecosystem exploded with tools like Appium and Selenium. These tools helped teams increase the speed and scale of testing, and gradually the industry moved toward a hybrid model: some tests remained manual, while repetitive scenarios were automated. But, even with automation, the reality is that testing has never been fully automated.

Anyone who has worked in automation knows that not everything can or should be automated. Because of that, this blended model of manual and automated testing became the de facto standard for the better part of the last decade.

Over time, newer frameworks like Playwright and Cypress improved execution speed and developer experience. But despite these improvements, the overall workflow didn’t fundamentally change. Testers still spent significant time designing and writing test cases, while frameworks mainly focused on executing those tests faster and more reliably.

And then AI showed up.

Advent of AI

For the purpose of this article, I’ll use the terms AI and LLMs interchangeably.

In the last couple of years, AI-powered tools have dramatically increased developer productivity. In fact, enterprise studies have shown that AI-assisted development can significantly accelerate engineering output, with some large-scale deployments reporting over 30% improvement in development cycle efficiency.

That’s great for innovation—but it also creates an interesting challenge: when developers start shipping code faster, traditional QA processes quickly become the new bottleneck.

And the industry is already feeling this shift.

Industry surveys show that more than 40% of QA teams have already integrated AI tools into their testing processes, and adoption is growing rapidly as organizations try to keep pace with faster development cycles.

At the same time, the broader enterprise technology landscape is moving heavily toward AI augmentation. Gartner predicts that by 2030, about 75% of IT work will be performed by humans working alongside AI, fundamentally changing how software is built, tested, and delivered.

This shift is exactly why AI has become so interesting for QA teams.

When we talk about AI for QA teams, there is a lot it can help with. The most basic use case is automated test creation, which makes sense given how good modern LLMs have become at writing code. Beyond that, there are self-healing scripts that reduce flakiness, and more advanced use cases such as error classification, root cause analysis, and smarter analytics.

There’s a lot to unpack there.

In this series of posts, I’ll explore several of these areas and how they can actually be applied in real testing workflows. But for this first article, I want to start with one of the most practical and immediately useful use cases:

How AI Can Help Accelerate Test Creation

We interact with LLMs through a prompt. It can be something as simple as:

“Create automation code for the login page of following website – www.google.com”

As we all know by now, AI can generate code in a matter of seconds, but whether that code is:

- clean or messy,

- maintainable or brittle,

- flaky or stable and

- enterprise ready or demo quality

depends on a few factors, such as how you instruct the model and what the model already knows.

Now, there are multiple ways to improve the quality, such as:

- Prompt Optimization

- RAG – Retrieval Augmented Generation

- Fine Tuning the model

and many more.

For this article, let’s start with the easiest one: better prompting. In the next section, we’ll look at how to approach prompt creation.

Several people I have talked to told me that better prompting is about “asking nicely”. Well, it’s not 😀

Prompting is about engineering constraints.

The Art of Prompting

In this section, I’ll break down, in simple terms, how to structure prompts so that we get the high-quality output we want from LLMs. Even if you’ve never written a prompt before, by the end you’ll understand how to design one that consistently produces high-quality automation tests, and I’ll give you a sample prompt as well.

So, let’s get started.

1. Start by Assigning a Role – LLMs respond differently depending on the role you assign. If you simply say “generate a Selenium test”, you’ll get generic, internet-style output. But when you define a role such as “Senior Automation Engineer”, you influence:

- Code structure,

- Best practice adherence and

- Design maturity to name a few.

You are essentially choosing the “experience level” of the engineer writing the test. This is the first lesson of prompting: Always define who the AI is supposed to be.

For our example – I will ask the LLM to be a Senior Automation Engineer.

2. Lock the Technology Stack – Most of you have probably guessed this one already. Without it, we might not be able to run the AI-generated code on our infrastructure or integrate it into our existing test suites.

For our example – I will stick to Java, Selenium & TestNG.

3. Enforce Architecture – Good architecture keeps tests maintainable and scalable in the long run, and it matters more as your test suites grow. AI also doesn’t know which architecture your existing test suites use, so we need to define it explicitly to get results that integrate easily with our existing work.

For our example – I will ask the AI to use the Page Object Model.

4. Restrict the Output Scope – AI is very good at generating code, but if you don’t restrict it, the output can be overwhelming. And if you repeat the exercise multiple times, you end up with a lot of duplicate code, which the right constraints can prevent.

For our example – I am going to ask the LLM to generate only the TestNG @Test method, not the full class, imports, or page objects. This makes the generated code cleaner and easier to integrate with an existing project.
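A scope constraint like this is easy to verify mechanically once the response comes back. The following is a minimal sketch, not part of any framework: OutputScopeCheck and its regular expressions are hypothetical names and heuristics made up for illustration, and they match plain text rather than parse Java.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper: flags model output that goes beyond a single @Test method.
final class OutputScopeCheck {

    // A line that begins with an import or package statement.
    private static final Pattern IMPORT_OR_PACKAGE =
            Pattern.compile("(?m)^\\s*(?:import|package)\\s+.+;");

    // A line that begins with a class, interface, or enum declaration.
    private static final Pattern TYPE_DECLARATION = Pattern.compile(
            "(?m)^\\s*(?:(?:public|private|protected|final|abstract|static)\\s+)*"
                    + "(?:class|interface|enum)\\s+\\w+");

    private static final Pattern TEST_ANNOTATION = Pattern.compile("@Test\\b");

    // Returns a list of human-readable problems; an empty list means the output is in scope.
    static List<String> violations(String generated) {
        List<String> problems = new ArrayList<>();
        if (IMPORT_OR_PACKAGE.matcher(generated).find()) {
            problems.add("contains import or package statements");
        }
        if (TYPE_DECLARATION.matcher(generated).find()) {
            problems.add("contains a class, interface, or enum declaration");
        }
        int testCount = 0;
        Matcher tests = TEST_ANNOTATION.matcher(generated);
        while (tests.find()) {
            testCount++;
        }
        if (testCount != 1) {
            problems.add("expected exactly one @Test annotation, found " + testCount);
        }
        return problems;
    }
}
```

If the list is not empty, the response can be rejected or sent back to the model with the violations appended to the prompt.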

5. Define Environmental Assumptions – Ambiguity causes hallucinations, so it’s always good to state environmental assumptions up front: for example, that WebDriver is already initialised, or whether execution is local or in the cloud. By defining assumptions, we stabilise the output.

6. Define Best Practices / Forbid Bad Practices – This is just like telling a junior automation engineer what they should and should not do when building a test: naming conventions, explicit waits instead of Thread.sleep, and so on.

If there’s a bad practice you don’t want — explicitly forbid it.

7. Demand Assertions – Unless validation is demanded explicitly, AI sometimes generates scripts that perform actions but never validate results. That’s not a test, that’s a script.

So, always require outcomes, not just actions.
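The rules from steps 6 and 7 can also be spot-checked on whatever the model returns. Below is a rough sketch under my own assumptions: GeneratedTestLint is a hypothetical helper, and it does simple text matching, so a Thread.sleep mentioned in a comment would be flagged too.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Pattern;

// Hypothetical helper: checks generated test source for one forbidden
// practice (Thread.sleep) and one required practice (at least one assertion).
final class GeneratedTestLint {

    // Matches calls such as Thread.sleep(5000).
    private static final Pattern THREAD_SLEEP =
            Pattern.compile("\\bThread\\s*\\.\\s*sleep\\s*\\(");

    // Matches Assert.assertTrue(...) style calls, or statically imported assertXxx(...) calls.
    private static final Pattern ASSERTION =
            Pattern.compile("\\bAssert\\s*\\.\\s*\\w+\\s*\\(|\\bassert[A-Z]\\w*\\s*\\(");

    // Returns the rule violations found; an empty list means both rules are satisfied.
    static List<String> findings(String testMethodSource) {
        List<String> findings = new ArrayList<>();
        if (THREAD_SLEEP.matcher(testMethodSource).find()) {
            findings.add("uses Thread.sleep instead of an explicit wait");
        }
        if (!ASSERTION.matcher(testMethodSource).find()) {
            findings.add("contains no assertion, so it is a script and not a test");
        }
        return findings;
    }
}
```

A check like this does not replace code review, but it catches the two most common slips before a human has to look at the output.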

8. Handle Missing Information Gracefully – I believe this may be the most important part of all. When we prompt for test creation, we will almost always leave out some piece of information. Unless we tell the AI what to do in that case, it may silently invent behaviour, which is difficult to debug and maintain later. So make sure the AI highlights any missing information, so that we can keep improving our prompts.
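One way to get missing information highlighted is to ask the model to tag every assumption with a fixed marker and then collect those lines for review. The marker "// ASSUMPTION:" and the AssumptionExtractor class below are hypothetical; the sample prompt in this article only asks for assumptions to be mentioned in comments, so the marker would need to be added to the prompt.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper: collects assumption comments from generated test code,
// assuming the prompt told the model to prefix each one with MARKER.
final class AssumptionExtractor {

    static final String MARKER = "// ASSUMPTION:";

    // Returns the text following MARKER on every line that contains it.
    static List<String> extract(String generated) {
        List<String> assumptions = new ArrayList<>();
        for (String line : generated.split("\\R")) {
            int index = line.indexOf(MARKER);
            if (index >= 0) {
                String text = line.substring(index + MARKER.length()).trim();
                if (!text.isEmpty()) {
                    assumptions.add(text);
                }
            }
        }
        return assumptions;
    }
}
```

Each extracted assumption points at a detail the scenario did not specify, which makes it a natural checklist for improving the next version of the prompt.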

9. Any Additional Context – Now that we have defined the key parts of the prompt, this is a good place to add any other context that is relevant to your existing framework or to the way you define your tests.

10. Return Type – If you want the LLM to return the result in a desired format, it’s always best to define the return type along with an example, so that our LLM knows how we are expecting the info to be returned. This helps in reducing the manual overhead while incorporating the generated code with our existing tests.

11. Scenario and Test Data – And lastly, we need to pass a scenario for the LLM to work with – and probably some test data!
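If you generate tests for many scenarios, the fixed parts of the prompt can live in code while only the scenario changes. Here is a minimal sketch of that idea. TestPromptBuilder and its method names are hypothetical, and the section labels simply mirror the structure described above.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper: assembles a prompt from a role, numbered requirements,
// an optional return format, and a scenario.
final class TestPromptBuilder {

    private String role = "";
    private final List<String> requirements = new ArrayList<>();
    private String returnFormat = "";
    private String scenario = "";

    TestPromptBuilder role(String role) {
        this.role = role;
        return this;
    }

    // Stack, architecture, scope, assumptions, and practices all go in as requirements.
    TestPromptBuilder require(String requirement) {
        requirements.add(requirement);
        return this;
    }

    TestPromptBuilder returnFormat(String returnFormat) {
        this.returnFormat = returnFormat;
        return this;
    }

    TestPromptBuilder scenario(String scenario) {
        this.scenario = scenario;
        return this;
    }

    String build() {
        if (role.isEmpty() || scenario.isEmpty()) {
            throw new IllegalStateException("role and scenario are required");
        }
        StringBuilder prompt = new StringBuilder();
        prompt.append(role).append("\n\n");
        prompt.append("STRICT REQUIREMENTS:\n");
        for (int i = 0; i < requirements.size(); i++) {
            prompt.append(i + 1).append(". ").append(requirements.get(i)).append('\n');
        }
        if (!returnFormat.isEmpty()) {
            prompt.append("\nReturn only:\n").append(returnFormat).append('\n');
        }
        prompt.append("\n---\nSCENARIO:\n").append(scenario).append('\n');
        return prompt.toString();
    }

    public static void main(String[] args) {
        String prompt = new TestPromptBuilder()
                .role("You are a senior automation test engineer.")
                .require("Language: Java")
                .require("Design Pattern: Page Object Model (POM)")
                .require("Do NOT use Thread.sleep().")
                .scenario("Open the login page, log in, and verify the dashboard is shown.")
                .build();
        System.out.println(prompt);
    }
}
```

Keeping the role and requirements in one place means every generated test is produced under the same constraints, and a change to a rule only has to be made once.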

Sample Prompt

Now that we have defined the structure of the prompt, let’s follow these steps and build a prompt that works. I have used the structure above to create this sample prompt to get you started:

You are a senior automation test engineer with deep expertise in Selenium and TestNG.

Your task is to convert the provided business scenario into a production-ready automated test method.

STRICT REQUIREMENTS:

1. Language: Java

2. Framework: Selenium & TestNG

3. Design Pattern: Page Object Model (POM)

4. Output: Generate ONLY the TestNG @Test method (do NOT generate the full class, imports, or page object classes).

5. Assume:

- WebDriver instance is already initialized and available as: driver

- Page Object classes already exist and can be instantiated using the driver

- Local execution environment

6. Use explicit waits (WebDriverWait). Do NOT use Thread.sleep().

7. Include meaningful assertions using TestNG Assert class.

8. Use clean and readable code.

9. Use proper method names and variable names.

10. Add brief comments explaining each logical step.

11. Use realistic page object method calls (e.g., loginPage.enterUsername(), homePage.searchProduct(), cartPage.getCartCount()).

12. If test data is required, define it clearly inside the method.

13. If any details are missing in the scenario, make reasonable assumptions and mention them in comments.

14. Do NOT generate pseudo-code. Generate executable Java code only.

Additionally:

- Identify and clearly implement validation steps.

- Handle synchronization using WebDriverWait inside the test method if needed.

- Ensure the test method is robust and readable.

Return only:

@Test
public void <meaningfulTestName>() {
    // implementation
}

---

SCENARIO:

Open the login page “www.xyz.com”

Enter "test" in the username & "test" in the password field

Click “Login”

Expected: User is redirected to dashboard or home page (session started)

Next Steps

Feel free to make any modifications to the above prompt as you see fit to make it work for your requirements.

In this article, we discussed one way of improving the quality of AI-generated code. In the upcoming posts in this series, we will dive deeper into other aspects such as choosing the right model, RAG, and fine-tuning.

In the meantime, feel free to explore some of our other articles –

AI in Software Testing: Hype, Reality, and Where Teams Actually See ROI

You Might Also Like

But Where Are You Going to Run All of Those Tests?

Something interesting is happening in QA teams right now. AI…

Virtual vs Real Devices: What Actually Matters in Mobile Testing

If you’ve spent any time testing mobile apps, you already…

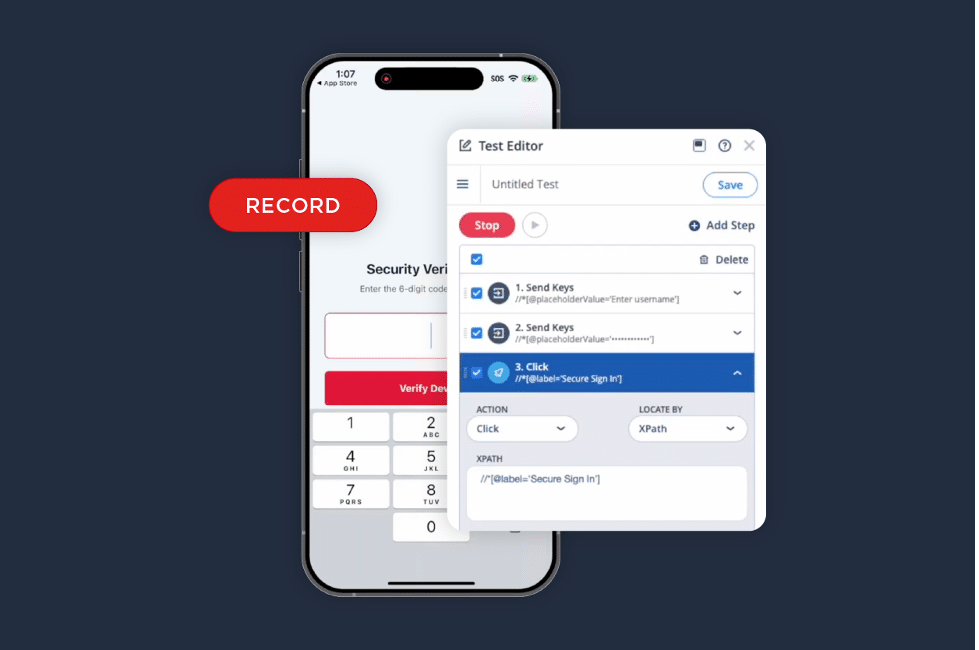

iOS Test Recorder: A Faster Way to Turn Validation into Automation

We listened to your feedback. The iOS Test Recorder is…