Catalyst Blog

Featured Post

More From The Blog

Cyber Resilience Act Compliance & Application Security

Most organizations approaching the Cyber Resilience Act are investing in…

How Digital.ai Deploy Makes GitOps A Reliable, Governed Model

Executive summary: Deploy 26.1 introduces a tightly scoped GitOps capability…

Healthcare Application Testing: Why Failures Escape Detection

Why Critical Healthcare Application Failures Often Escape Testing. Picture this: …

From App Store to Clone: How AI Turns Your .ipa Into a Blueprint

AI: Accelerating Reverse Engineering. Every iOS app you ship…

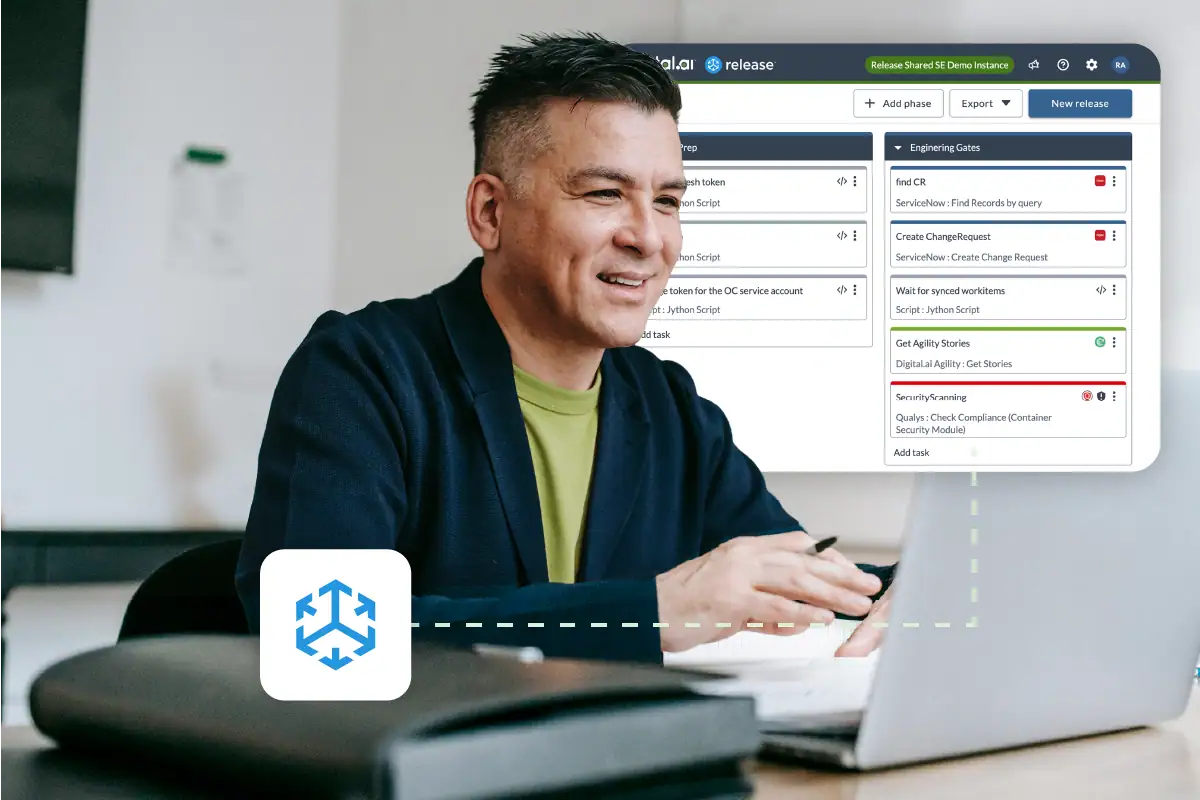

New SaaS Platform for Digital.ai Release

Overview: Release SaaS. Digital.ai’s Release SaaS Platform is a…

Air-Gapped Testing Without Tradeoffs: Secure & Scalable

Secure Doesn’t Mean Slow: Modernizing Application Testing in Air-Gapped Environments…

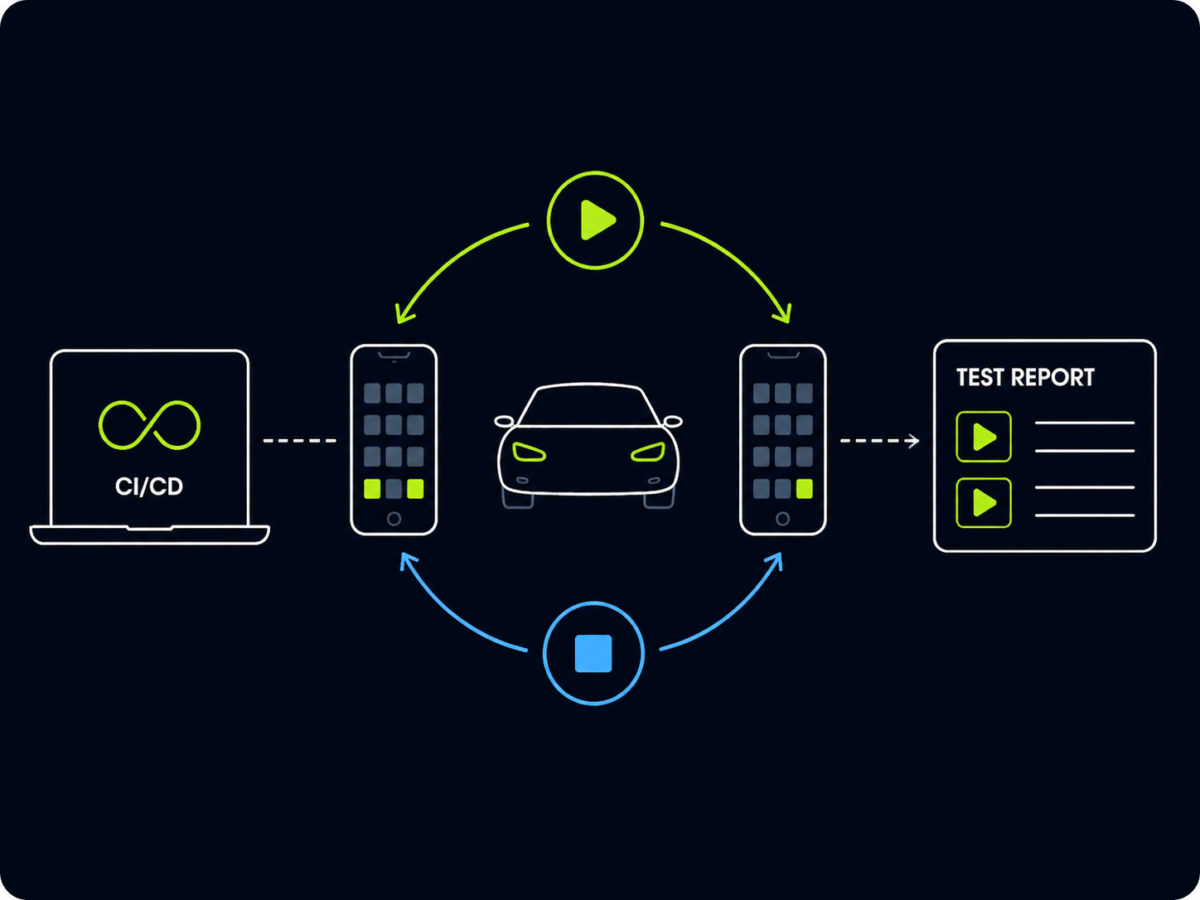

How to Start and Stop Automotive Projection in Appium Tests

Control When Your Test Enters and Exits Automotive Mode —…

Reducing Release Risk in Financial Application Testing

How Financial Institutions Reduce Release Risk Without Slowing Down Delivery …

The Fourth Wave: When AI Writes the Code — and Hacks It

We’ve crossed a meaningful inflection point in the evolution to…