Catalyst Blog

Featured Post

More From The Blog

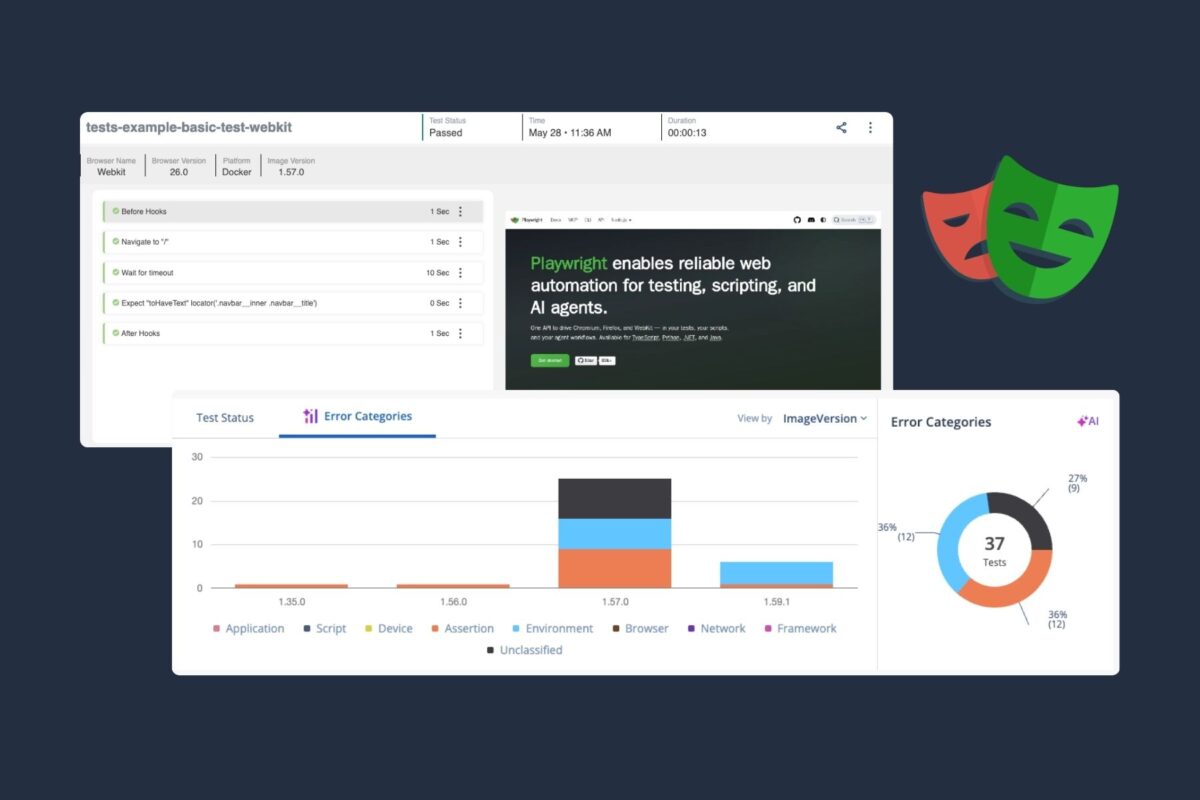

How Teams Are Scaling Playwright Beyond Local CI

The numbers back it up. Adoption among professional QA teams…

The Three Hardest Arguments Against White-Box Cryptography–and Why They Miss the Point

In part 1 of the series, we looked at where…

The myth of “rip-and-replace” software delivery in regulated enterprises

In regulated industries, the pressure to “modernize the delivery toolchain”…

Why Healthcare Workflows Are Hard to Test

In healthcare applications, the workflows that matter most are often…

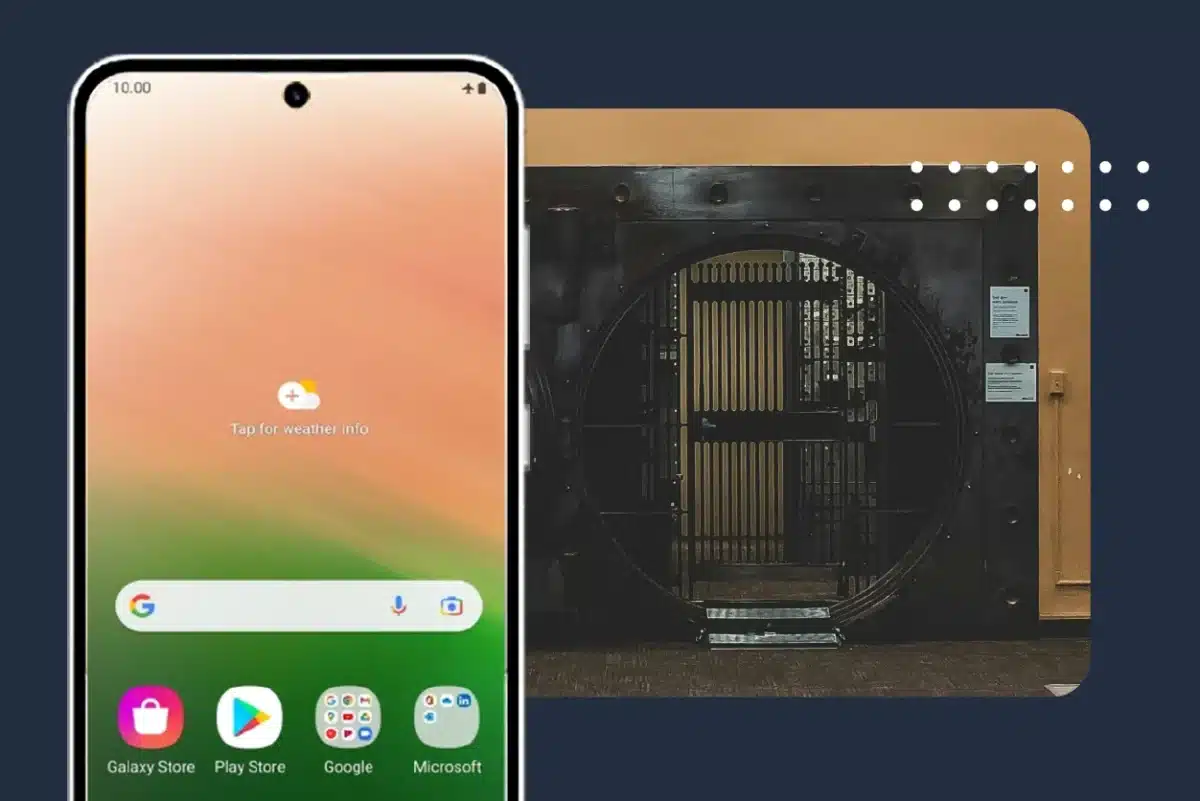

Your Hardware Security Is Working. That’s Not the Problem.

We hear a version of this objection regularly: “We’re already…

Migrating from Jira Data Center for Regulated Enterprises

Understanding Jira Data Center end-of-life: Jira Data Center is where…

Cyber Resilience Act Compliance & Application Security

Most organizations approaching the Cyber Resilience Act (CRA) are investing…

How Digital.ai Deploy Makes GitOps A Reliable, Governed Model

Executive summary: Deploy 26.1 introduces a tightly scoped GitOps capability…

Healthcare Application Testing: Why Failures Escape Detection

Why Critical Healthcare Application Failures Often Escape Testing. Picture this: …