Catalyst Blog

Featured Post

More From The Blog

Why Healthcare Workflows Are Hard to Test

In healthcare applications, the workflows that matter most are often…

Your Hardware Security Is Working. That’s Not the Problem.

We hear a version of this objection regularly: “We’re already…

Migrating from Jira Data Center for Regulated Enterprises

Understanding Jira Data Center end-of-life: Jira Data Center is where…

Cyber Resilience Act Compliance & Application Security

Most organizations approaching the Cyber Resilience Act are investing in…

How Digital.ai Deploy Makes GitOps A Reliable, Governed Model

Executive summary: Deploy 26.1 introduces a tightly scoped GitOps capability…

Healthcare Application Testing: Why Failures Escape Detection

Why Critical Healthcare Application Failures Often Escape Testing. Picture this: …

From App Store to Clone: How AI Turns Your .ipa Into a Blueprint

AI – Accelerating Reverse Engineering. Every iOS app you ship…

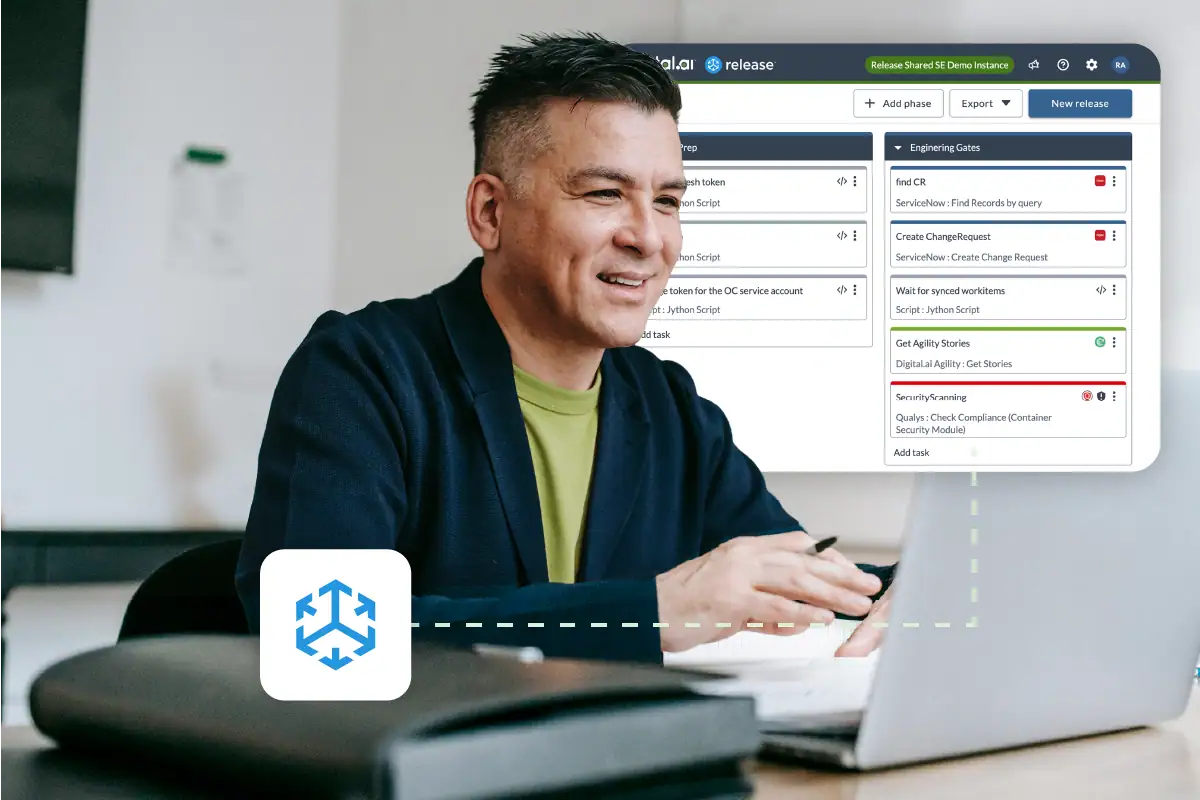

New SaaS Platform for Digital.ai Release

Overview – Release SaaS. Digital.ai’s Release SaaS Platform is a…

Air-Gapped Testing Without Tradeoffs: Secure & Scalable

Secure Doesn’t Mean Slow: Modernizing Application Testing in Air-Gapped Environments…